logistic_regression.md 9.1 KB

Federated Logistic Regression

Logistic Regression(LR) is a widely used statistic model for classification problems. FATE provided two modes of federated LR: Homogeneous LR (HomoLR) and Heterogeneous LR (HeteroLR and Hetero_SSHE_LR).

Below lists features of each LR models:

| Linear Model | Multiclass(OVR) | Arbiter-less Training | Weighted Training | Multi-Host | Cross Validation | Warm-Start/CheckPoint |

|---|---|---|---|---|---|---|

| Hetero LR | ✓ | ✗ | ✓ | ✓ | ✓ | ✓ |

| Hetero SSHELR | ✓ | ✓ | ✓ | ✗ | ✓ | ✓ |

| Homo LR | ✓ | ✗ | ✓ | ✓ | ✓ | ✓ |

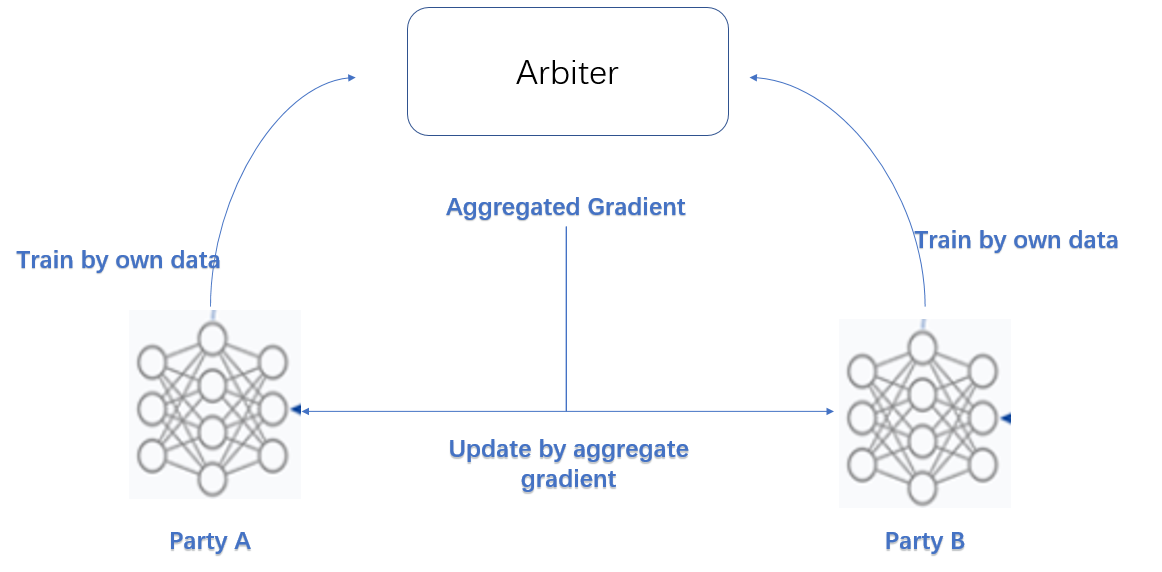

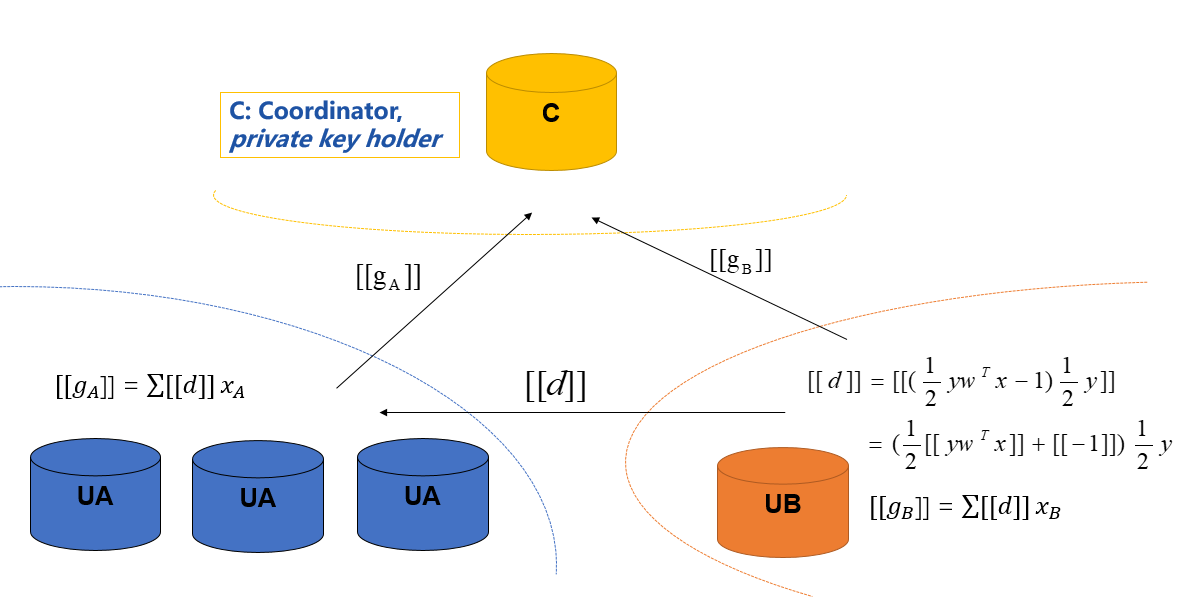

We simplified the federation process into three parties. Party A represents Guest, party B represents Host while party C, which also known as "Arbiter", is a third party that holds a private key for each party and work as a coordinator. (Hetero_SSHE_LR have not "Arbiter" role)

Homogeneous LR

As the name suggested, in HomoLR, the feature spaces of guest and hosts are identical. An optional encryption mode for computing gradients is provided for host parties. By doing this, the plain model is not available for this host any more.

Models of Party A and Party B have the same structure. In each iteration, each party trains its model on its own data. After that, all parties upload their encrypted (or plain, depends on your configuration) gradients to arbiter. The arbiter aggregates these gradients to form a federated gradient that will then be distributed to all parties for updating their local models. Similar to traditional LR, the training process will stop when the federated model converges or the whole training process reaches a predefined max-iteration threshold. More details is available in this Practical Secure Aggregation for Privacy-Preserving Machine Learning.

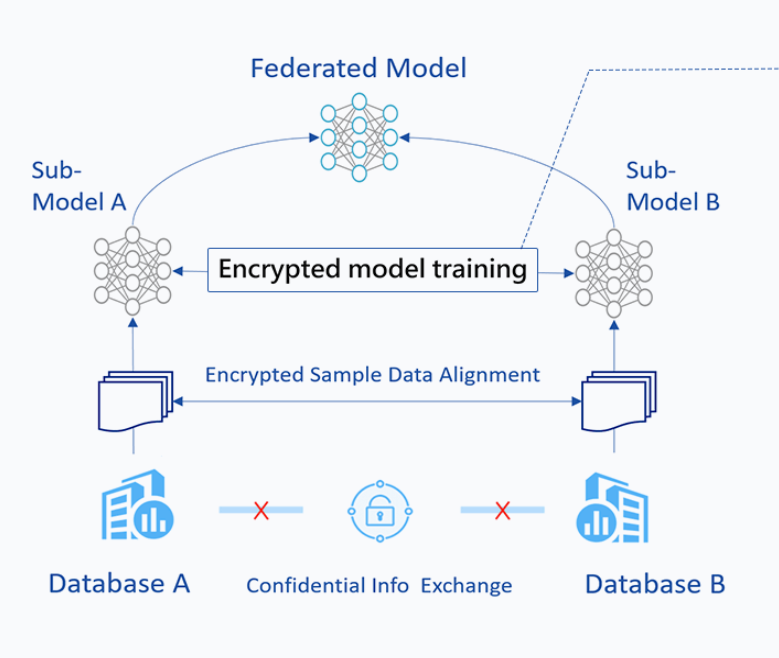

Heterogeneous LR

The HeteroLR carries out the federated learning in a different way. As shown in Figure 2, A sample alignment process is conducted before training. This sample alignment process is to identify overlapping samples stored in databases of the two involved parties. The federated model is built based on those overlapping samples. The whole sample alignment process will not leak confidential information (e.g., sample ids) on the two parties since it is conducted in an encrypted way.

In the training process, party A and party B compute out the elements needed for final gradients. Arbiter aggregate them and compute out the gradient and then transfer back to each party. More details is available in this: Private federated learning on vertically partitioned data via entity resolution and additively homomorphic encryption.

Multi-host hetero-lr

For multi-host scenario, the gradient computation still keep the same as single-host case. However, we use the second-norm of the difference of model weights between two consecutive iterations as the convergence criterion. Since the arbiter can obtain the completed model weight, the convergence decision is happening in Arbiter.

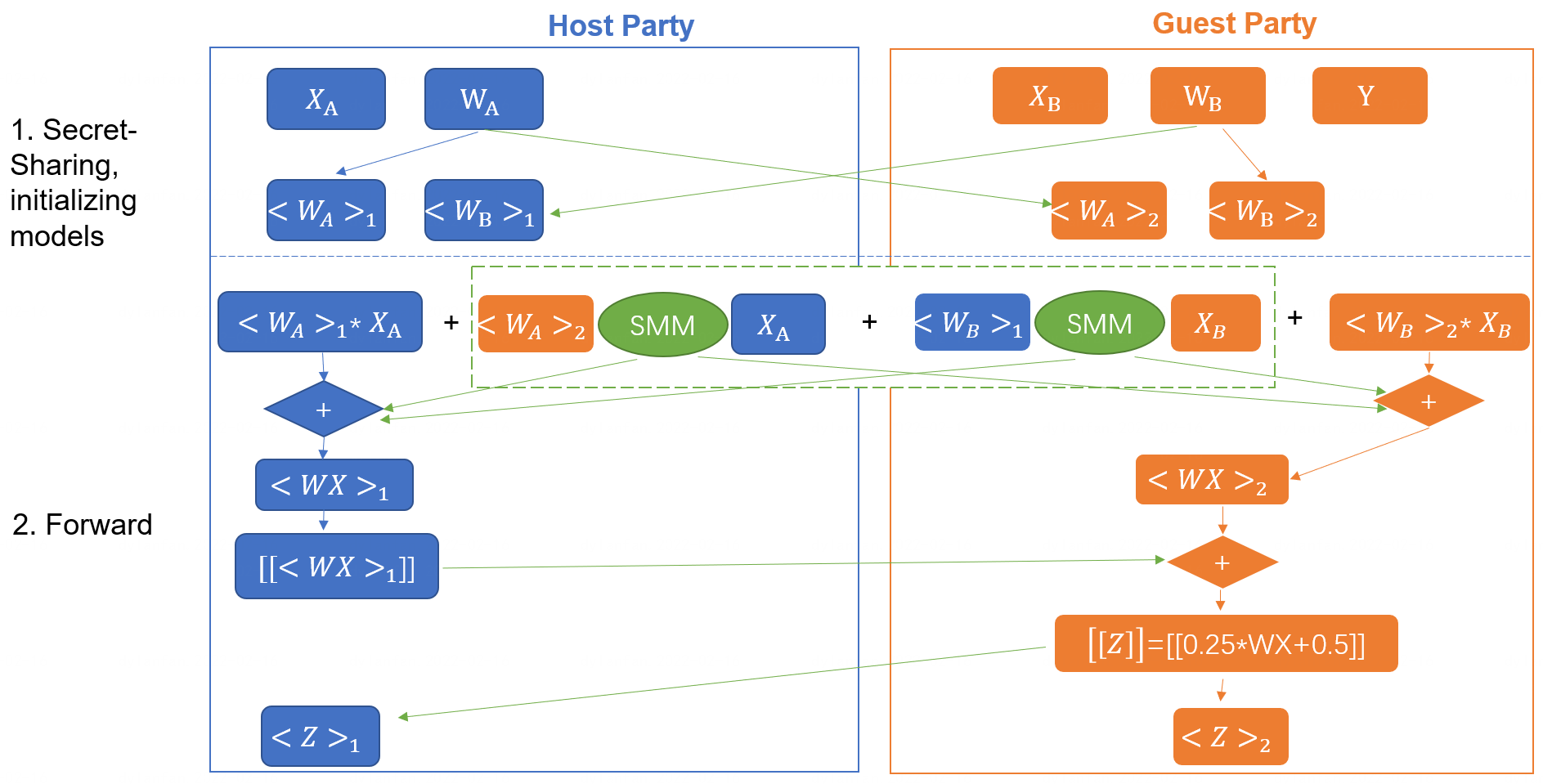

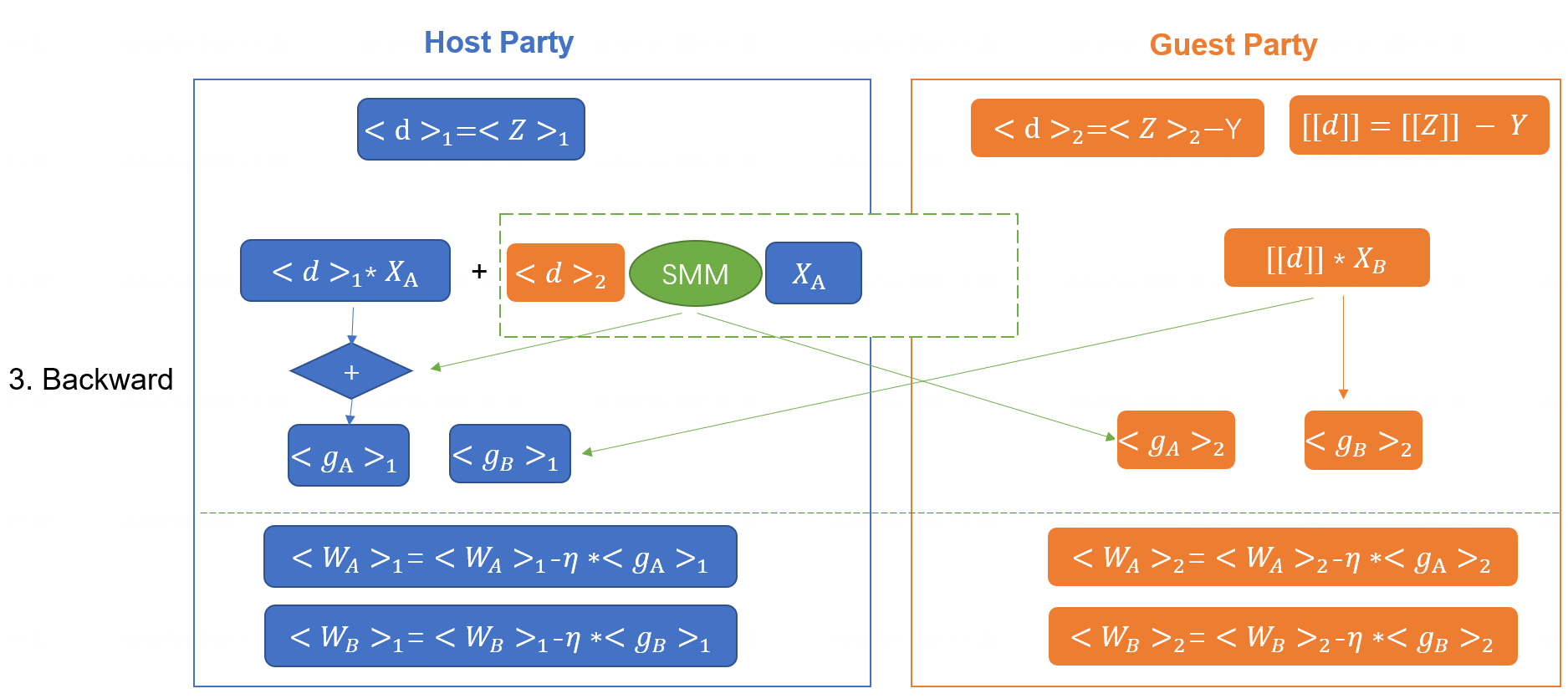

Heterogeneous SSHE Logistic Regression

FATE implements a heterogeneous logistic regression without arbiter role

called for hetero_sshe_lr. More details is available in this

following paper: When Homomorphic Encryption Marries Secret Sharing:

Secure Large-Scale Sparse Logistic Regression and Applications

in Risk Control.

We have also made some optimization so that the code may not exactly

same with this paper.

The training process could be described as the

following: forward and backward process.

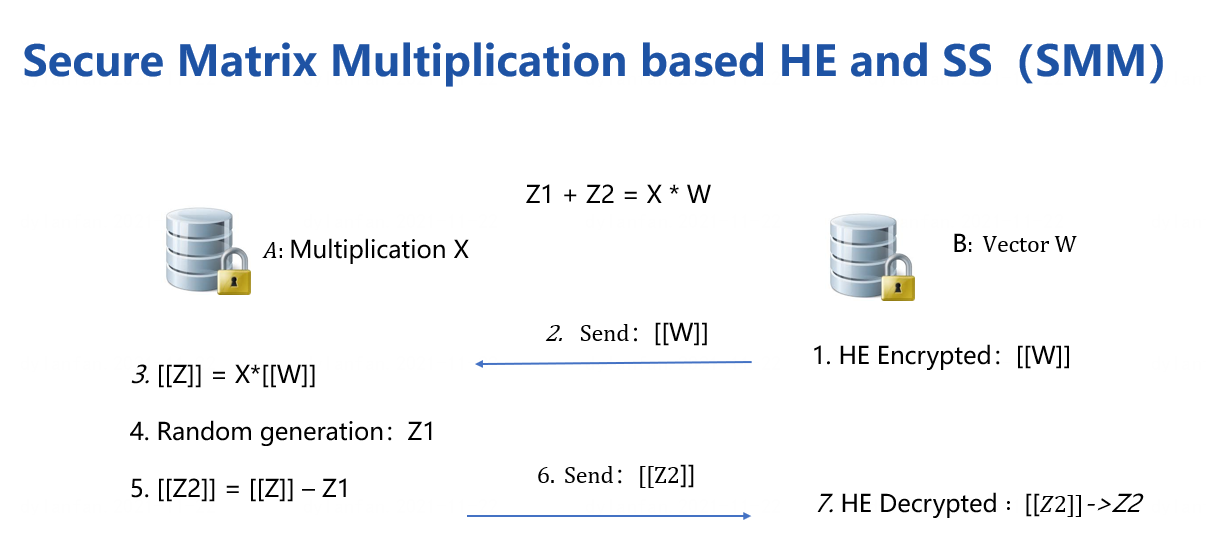

The training process is based secure matrix multiplication protocol(SMM),

which HE and Secret-Sharing hybrid protocol is included.

Features

- Both Homo-LR and Hetero-LR

L1 & L2 regularization

Mini-batch mechanism

Weighted training

Six optimization method:

sgd

gradient descent with arbitrary batch sizermsprop

RMSPropadam

Adamadagrad

AdaGradnesterov_momentum_sgd

Nesterov MomentumThree converge criteria:

diff

Use difference of loss between two iterations, not available for multi-host training;abs

use the absolute value of loss;weight_diff

use difference of model weightsSupport multi-host modeling task.

Support validation for every arbitrary iterations

Learning rate decay mechanism

- Homo-LR extra features

Two Encryption mode

"Paillier" mode

Host will not get clear text model. When using encryption mode, "sgd" optimizer is supported only.Non-encryption mode

Everything is in clear text.Secure aggregation mechanism used when more aggregating models

Support aggregate for every arbitrary iterations.

Support FedProx mechanism. More details is available in this Federated Optimization in Heterogeneous Networks.

- Hetero-LR extra features

- Support different encrypt-mode to balance speed and security

- Support OneVeRest

- When modeling a multi-host task, "weight_diff" converge criteria > is supported only.

- Support sparse format data

- Support early-stopping mechanism

- Support setting arbitrary metrics for validation during training

- Support stepwise. For details on stepwise mode, please refer stepwise.

Support batch shuffle and batch masked strategy.

- Hetero-SSHE-LR extra features > 1. Support different encrypt-mode to balance speed and security > 2. Support OneVeRest > 3. Support early-stopping mechanism > 4. Support setting arbitrary metrics for validation during training > 5. Support model encryption with host model